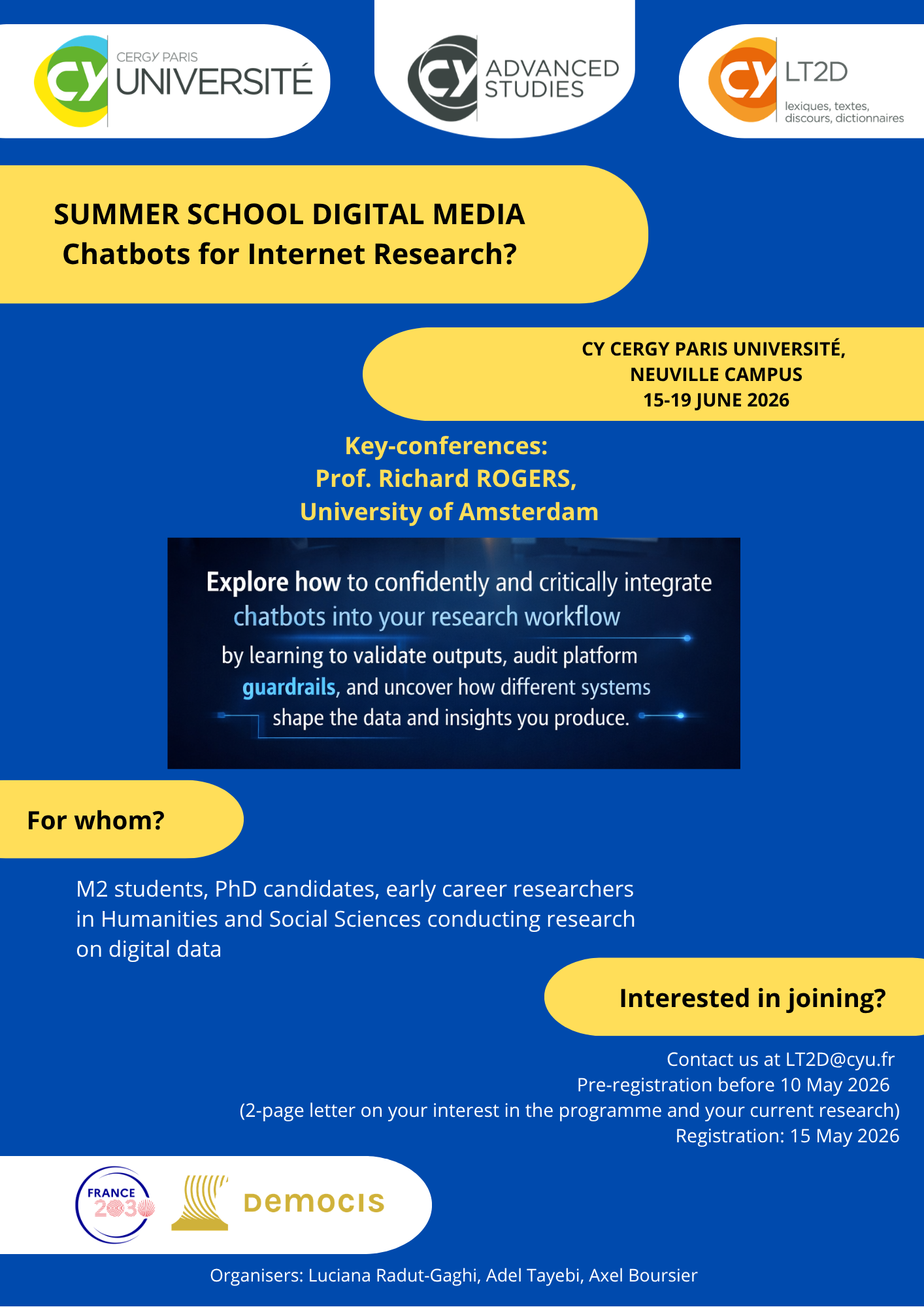

June 15, 2026 to June 19, 2026

Published on May 7, 2026; updated on May 7, 2026

Chatbots for Internet Research?

Location: CY Cergy Paris University, a dynamic university in the Paris area. RER A station: Neuville University.

https://advancedstudies.cyu.fr/manifestations-scientifiques/conferences-workshops/annee-en-cours/chatbots-for-internet-research